The GEO industry is measuring the wrong thing. AI search is brand building, not performance marketing — and the data proves it. Here's what to do instead.

The question isn't 'how many clicks did AI drive?', it's 'how many consideration sets did we enter?'

This is for people who want to understand AI search honestly. Someone who sits in the middle of the "it's just SEO" and "it's totally new, nothing else matters any more" spectrum. Someone who has a sneaking suspicion that the collapse in the network media agencies' share prices wasn't because people suddenly decided they like making caricatures of themselves on TikTok.

If you want someone to tell you that all you need to "do AI search good" is to buy a prompt tracker and write a couple of listicles, you should leave.

This is a long, fairly dense read. If you're the sort of person who needs things in snack-able format, frankly I don't care about your opinions anyway.

The entire GEO industry, such as it is, has become obsessed with "prompt visibility", tracking whether your brand gets mentioned when someone asks ChatGPT a question. There are now dozens of tools that will sell you dashboards showing which prompts you "rank" for, what position you appear in, how your "AI visibility" compares to competitors.

And I think this is almost entirely bullshit. Everyone is assuming that AI search works like Google search: query leads to result, result leads to click, click leads to conversion, conversion leads to revenue. Nice clean funnel. Easy to measure. Satisfying to optimise. But what if that conversion path doesn't actually exist? What if we're measuring something that doesn't matter, because we don't know how to measure the thing that does?

The digital marketing industry has a hammer (performance metrics, attribution models, click-through rates), and so everything looks like a nail. AI search got called "search" so it must work like search, right? We'll just track it like we track Google and everything will be fine. Except it won't. And the data proves it.

Let's start with the basics. ChatGPT had roughly 900 million weekly active users as of late 2025[1]. That's a lot of people doing a lot of things. But what are they actually doing?

About 49% of usage is asking questions and 40% is getting tasks done. Cut the same data a different way and 70%, the majority, is non-work usage: personal questions, curiosity, exploration, figuring things out[2]. People are asking "what's the difference between these two products" and "what should I look for when buying a mattress" and "explain this concept to me like I'm five." They are not, by and large, asking "where can I buy this thing right now and please give me a link." Your granny who thinks Google is the internet is not doing that, let's be real.

The IAB found that in AI shopping sessions, nearly 80% of people then visited a retailer or marketplace to validate their decisions: AI is research, not purchase[3]. Deloitte found that 56% of consumers use AI chatbots to compare prices, and 47% use them to summarise reviews[4]. This is all pre-purchase behaviour. This is people building mental models of what they might want to buy, not people buying things. Now here's where it gets interesting. What about the people who do click through from AI to a website?

Recent research from Ahrefs found that Google accounts for nearly 40% of all website traffic, while ChatGPT drives just 0.21%[5]. That's Google sending 190x more traffic to websites despite ChatGPT now having 12% of Google's search volume. Overall, AI referral traffic accounts for about 1% of all website visits[6]. And even Google's own AI features are keeping users in: 93% of AI Mode searches end without a click[7]. This is not a direct response channel. This is barely a traffic source at all.

But, and this is the crucial bit, when people do click through from ChatGPT, they convert incredibly well. ChatGPT referral traffic converts at 15.9%, compared to 1.76% for Google organic[8]. That's roughly 9x better. These are highly-researched, high-intent buyers who have done their homework and are ready to act.

So what does this tell us? The tiny sliver of people who click are incredibly valuable. But they're the tip of a very large iceberg. If you're getting 500 visits from ChatGPT, and those clickers are roughly 1% of the people who saw your brand mentioned, that implies something like 50,000 brand exposures you cannot see and cannot track. The question isn't "how many clicks did AI drive?", it's "how many consideration sets did we enter?" And if we infer from the sky-high conversion rates of "AI link clickers" that these people are very warm leads indeed, then it seems logical that AI is doing a great job of influencing purchase behaviour. We just can't measure it with Google Analytics.
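The iceberg arithmetic can be written out as a quick back-of-envelope sketch. The 1% click rate is the rough figure assumed in the paragraph above, not a measured constant, and `implied_exposures` is a hypothetical helper name.

```python
def implied_exposures(visits: int, click_rate: float) -> int:
    """Estimate how many brand exposures sit behind a visit count.

    click_rate is an assumption (e.g. 0.01 for "roughly 1% click"),
    not something your analytics can observe directly.
    """
    if not 0 < click_rate <= 1:
        raise ValueError("click_rate must be in (0, 1]")
    return round(visits / click_rate)


visits = 500
exposures = implied_exposures(visits, click_rate=0.01)
unseen = exposures - visits  # people who saw the brand but never clicked

print(f"{exposures:,} implied exposures, {unseen:,} of them invisible to analytics")
```

Halve the assumed click rate and the implied exposure count doubles, which is why this is only ever a directional estimate.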

The crazy thing is that this is exactly how television works. You don't measure TV by how many people immediately called the phone number at the end of the ad, no matter how many times you put a "Search [brand name]" CTA in your creative. (Lazy ad execs, you know who you are.) You measure it by brand recall and long-term sales lift. And if you tried to apply click-through rates to TV advertising, you'd conclude it doesn't work at all, which would be obviously wrong.

There's a framework from the Ehrenberg-Bass Institute that I think explains what's actually happening here, and it's not complicated, but it does require you to stop thinking like a performance marketer for five minutes. If you have ever planned or bought ATL activity, you can probably skip ahead a bit. The framework distinguishes between two things: mental availability and physical availability[9].

Mental availability is how easily your brand comes to mind when someone is in a buying situation. It's about salience, associations, memory structures, all the fuzzy brand stuff that performance marketers tend to dismiss as unmeasurable fluff. When someone thinks "I need running shoes," which brands pop into their head? Is it Nike, Adidas, or is it www.buybestrunningshoesnow.com? That's mental availability.

Physical availability is how easy it is to actually find and buy your product. Distribution, shelf space, search visibility, whether you show up when someone is ready to transact. Google Shopping has physical availability. Amazon has physical availability. The "buy now" button has physical availability. Coca-Cola built a global empire following the London Rat strategy of physical availability: you are never more than 6 feet from one.

Here's the thing about AI search: it operates almost entirely in the mental availability space. When someone asks Claude "what are good running shoes for flat feet?" they are not buying. They are building a mental model of what they might buy later. They are seeding their consideration set. They are doing research.

As an anecdotal aside, this is exactly how I ended up buying a SURI toothbrush. I have never seen an ad for it in my life, because I am one of those media people who run about 3 different layers of Adblock software at all times. I asked Claude to help me find an electric toothbrush that actually works and doesn't charge me £100+ primarily for an app that they will stop supporting in 12 months, and its top recommendation was SURI. About a month later I went to https://www.trysuri.com directly to buy one. Their attribution model will say I was a "Direct" sale. I was not.

AI search has almost nothing to do with physical availability. ChatGPT conversations do not have "buy now" buttons (at least, not yet), and when they include links, most people don't click them. The transaction happens somewhere else, later, through a different channel. OpenAI might include ads eventually, but its own guidelines have stated they won't work like traditional performance ads. Will that hold up to another quarter where OpenAI sets a new record for "most money lost by a company"? Maybe not, but right now this is the paradigm.

Ehrenberg-Bass also talks about the 95:5 rule: at any given moment, only about 5% of category buyers are actually "in market", ready to buy right now[10]. The other 95% are future buyers who are building mental structures that will influence their eventual purchase. Brands grow by increasing mental availability to the 95%, not just by converting the 5%.

If you're only measuring AI search by clicks and conversions, you're only measuring the 5%. You're ignoring the 95% where the actual brand building happens. And then you're concluding that AI doesn't work, when actually you're just measuring the wrong thing.

None of this is actually new. We've known for years that purchase journeys are messy and multi-channel, and that the place where someone does their research is often completely different from the place where they buy.

This is called the ROPO effect, Research Online, Purchase Offline, and the data on it is pretty clear and extremely well established[11].

So think about what this means for AI search. Someone asks ChatGPT about Samsung phones. They get information, they build a mental model, they decide Samsung is probably what they want. Then they go to Currys, or they go to their mobile carrier's store, or they click and collect from Argos. The AI touchpoint is upstream and completely unattributable.

And the time lag makes it even worse. Major purchases take an average of 79 days to finalise[12]. Consumers engage with a brand 56 times before buying[13]. E-commerce customers average 90 days between purchases. Only 12% of people start collecting information just days before they buy. The FMCG performance marketing "see ad, click ad, buy thing" model doesn't hold for considered purchases. It never did. So why are we trying to force AI search into that model?

Okay, so AI search is more like brand building than direct response. But can't we at least track how often we get mentioned? Can't we measure our "prompt visibility" and optimise for that? In theory, sure. In practice, there's a fundamental problem: LLMs are not deterministic. They don't give the same answer twice.

This isn't a bug or a limitation, it's how they work. Even if you set the temperature to zero, which is supposed to make outputs more consistent, you still get variation because of batch size differences during inference, because models like GPT-5 use mixture-of-experts architectures that route to different networks, because floating-point arithmetic on GPUs isn't perfectly reproducible, because the same prompt with different preceding context produces different outputs[14].

But don't take my word for it. SparkToro and Gumshoe actually tested this[15]. They had 600 volunteers run 12 different prompts through ChatGPT, Claude, and Google AI a combined 2,961 times over November and December 2025. And what they found was damning: there's less than a 1% chance that any AI, if asked the same question 100 times, will give you the same list of brands in any two responses. For the lists to appear in the same order, you're looking at roughly 1 in 1,000.

Their conclusion? "Ranking position in AI responses lacks validity. Any tool claiming to track where your brand ranks is providing essentially random data." The GEO tools selling you "you rank #3 for this prompt" are selling you noise. The position is meaningless. At best, at absolute best, you can measure whether you appear at all, and even that requires running the same prompt 60-100 times to get statistically meaningful data. Most tools give you 100-200 prompts per month. Unless they are secretly running each one a hundred times, so your 100 tracked prompts become 10,000 API calls for them, you're looking at the random data SparkToro's Rand Fishkin is warning about.
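To see why 60-100 runs is a plausible floor, here is a rough sanity check of my own, not SparkToro's method. It assumes each run is an independent yes/no draw ("did the brand appear?") and uses the textbook worst-case margin of error for a proportion at roughly 95% confidence. The function names are hypothetical.

```python
import math


def runs_needed(margin: float, z: float = 1.96) -> int:
    """Runs per prompt for a given margin of error on appearance rate.

    Uses the worst case p = 0.5 of the normal approximation to a
    binomial proportion: n = z^2 * 0.25 / margin^2.
    """
    return math.ceil(z ** 2 * 0.25 / margin ** 2)


def total_api_calls(prompts: int, runs_per_prompt: int) -> int:
    """API calls a tracker must make to sample every prompt properly."""
    return prompts * runs_per_prompt


for margin in (0.125, 0.10):
    print(f"±{margin:.1%} needs {runs_needed(margin)} runs per prompt")

print(total_api_calls(prompts=100, runs_per_prompt=100), "calls for 100 prompts")
```

Under those assumptions, a margin of about ±12.5 points needs 62 runs and ±10 points needs 97, which lands in the same 60-100 range. One run per prompt tells you almost nothing.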

Prompt visibility tracking is methodologically broken because it's trying to measure a moving target. TV ratings measure actual eyeballs. Web analytics measure actual clicks. Prompt visibility measures a non-reproducible, non-deterministic phenomenon and pretends it's stable. At a visceral level, typing =RANDBETWEEN(1,10) into Excel would get you similar results and save you time and money.

The measurement infrastructure for AI search simply isn't there yet. We're in the "counting how many TVs are tuned in" phase, not the "measuring brand recall and long-term sales lift" phase. And people are building entire businesses on metrics that don't actually mean anything.

Here's the thing: television faced exactly the same problem. When TV advertising was new, it was completely unmeasurable by the direct response standards of the time. You couldn't track who saw your ad. You couldn't attribute sales to specific spots. You couldn't build a nice clean funnel from impression to conversion.

And yet TV advertising obviously worked. Brands that advertised on TV grew. The problem wasn't that TV didn't work, the problem was that the measurement frameworks of the time weren't designed for it. As John Wanamaker allegedly said, "Half the money I spend on advertising is wasted; the trouble is I don't know which half."

So we developed new ones[16]. Brand recall studies, where you ask people which brands they remember seeing ads for. Brand lift studies, where you survey people before and after exposure and measure changes in awareness, consideration, and preference. Marketing Mix Modelling, where you use statistical techniques to estimate how much of your sales can be attributed to different marketing activities over time.

None of these are click-through rates. None of them give you the satisfying immediacy of "I spent £1, I got £1.50 back." But they work. They've been refined over decades. And they've allowed brands to make sensible decisions about TV advertising despite the fact that you can't track a click.

TV advertising delivers approximately 23% brand awareness lift, compared to 9% for digital channels[17]. We know this not because we tracked the clicks, there are no clicks, but because we developed measurement approaches that are actually appropriate to the medium.

AI search needs the same treatment. We need brand recall studies that ask people which brands they remember being mentioned by AI. We need lift studies that measure whether AI visibility correlates with changes in brand health. We need to accept that some channels can't be measured at the transaction level, and that's okay, because we have other tools.

What we don't need is more tools that track prompt position as if it were Google rank. That's not measurement. That's performance theatre.

There's a body of research from the IPA Databank, thousands of marketing effectiveness case studies, that's been analysed extensively by Les Binet and Peter Field[18]. Their key finding is that the optimal split between brand building and sales activation is roughly 60/40: 60% of your budget and effort should go toward long-term brand building, 40% toward short-term activation. Old hat, for the two media people who cared enough to read this far.

Emotional campaigns, the ones that build brand associations and mental availability, drive long-term profit growth. Rational, performance-focused campaigns drive short-term sales but don't build brand equity. And brands that over-index on short-term activation, the "performance at all costs" approach, see declining effectiveness over time. They're burning through the brand equity that makes performance work in the first place.

The entire GEO industry, as far as I can tell, is trying to force AI search into the 40% activation bucket. Track the prompt visibility. Optimise the rankings. Drive the clicks. Measure the conversions. Maybe this is a power grab by SEOs who have seen budgets get compressed. Maybe it's a genuine belief that AI is a performance channel. Whatever it is, it's wrong.

Because AI search belongs in the 60% brand building bucket. It's mental availability. It's consideration set seeding. It's the fuzzy upstream stuff that eventually drives sales but can't be attributed at the transaction level. By treating AI search as performance, we're making it less effective as the brand-building channel it actually is.

Maybe this is what OpenAI realised already, why they are planning to sell glorified banner ads at a $60 CPM, why they don't seem to have any attribution or ad buying data platform planned. Or maybe they are just desperate for more VC funding. Regardless, as an industry we're applying the wrong frameworks, measuring the wrong things, and then concluding that AI "doesn't work" when actually we're just being stupid about how we think about it.

So if prompt visibility tracking is broken, and purchase attribution is structurally impossible, what can you actually measure?

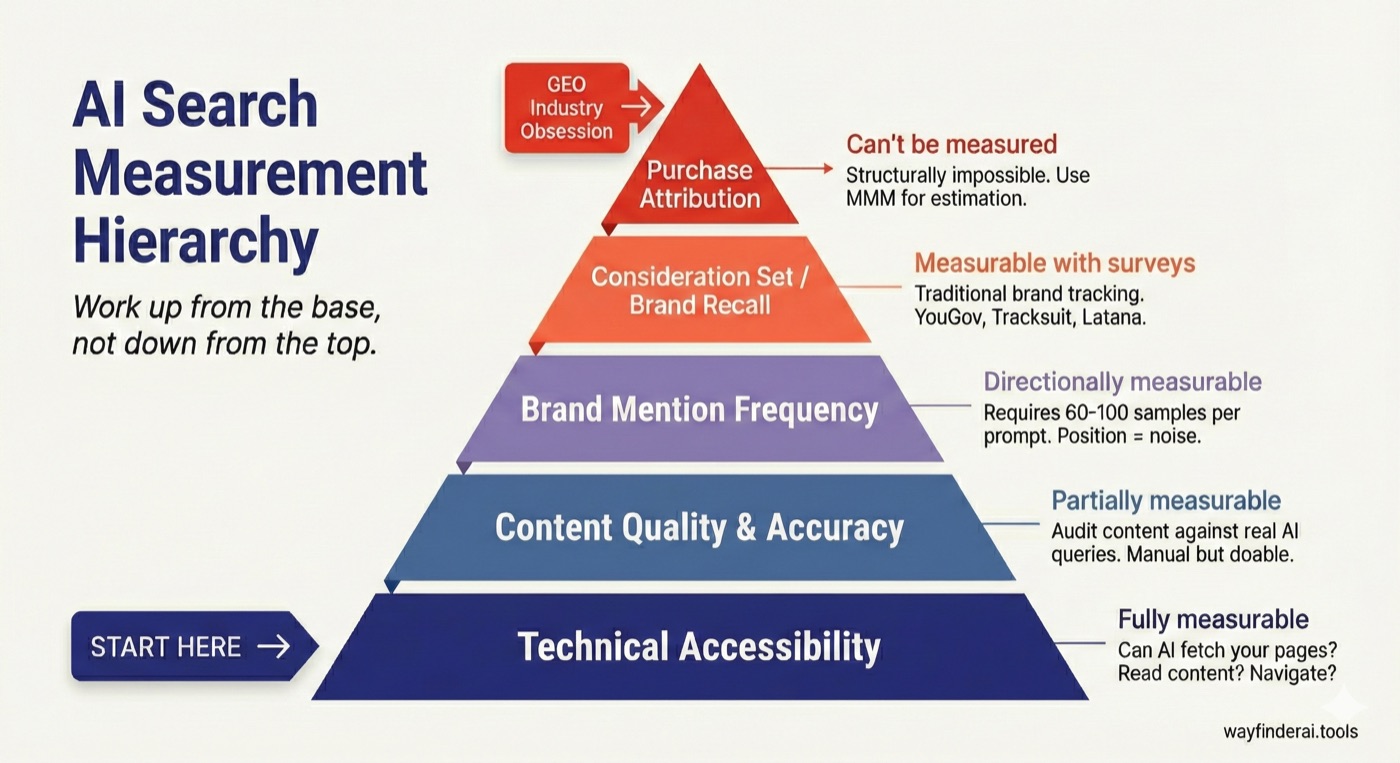

I think there's a hierarchy here, and the problem is that everyone is obsessed with the top of it while ignoring the bottom:

| Level | What | Can You Measure It? |
|---|---|---|
| 1 | Technical accessibility | Yes |
| 2 | Content quality and accuracy | Partially |
| 3 | Brand mention frequency | Sort of, with enough samples |
| 4 | Consideration set / brand recall | Yes, with surveys |
| 5 | Purchase attribution | No |

The GEO industry is obsessed with Level 5, the one thing that's structurally impossible. Meanwhile, most brands haven't even confirmed Level 1: can AI actually access their content at all? The smart approach is to work up from there, not down from Level 5. Fix the foundation first. Then worry about the stuff that's harder to measure.

Before you worry about brand mentions or consideration sets, you need to answer a simpler question: can AI even access your content? This sounds basic, and it is, but you'd be amazed how many brands fail at it. Blocked by robots.txt. Returning 403 errors to AI user agents. Hiding critical information behind JavaScript rendering that basic fetchers can't execute. Burying pricing and product details four clicks deep when AI navigation mostly can't get past click one.
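The robots.txt part of that check is easy to sketch with Python's standard library. This is a minimal illustration, not how any particular tool does it. The user agent tokens are examples that may go out of date, the robots.txt content is made up, and `ai_access_report` is a hypothetical helper. It says nothing about 403s, JavaScript rendering or click depth, which need actual fetches.

```python
from urllib.robotparser import RobotFileParser

# Example crawler tokens (assumed list; check each vendor's current docs).
AI_USER_AGENTS = ["GPTBot", "ClaudeBot", "PerplexityBot"]

# Hypothetical robots.txt that blocks one AI crawler and allows the rest.
SAMPLE_ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""


def ai_access_report(robots_txt: str, url: str) -> dict[str, bool]:
    """Map each AI user agent to whether robots.txt lets it fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in AI_USER_AGENTS}


report = ai_access_report(SAMPLE_ROBOTS_TXT, "https://example.com/pricing")
for agent, allowed in report.items():
    print(f"{agent}: {'allowed' if allowed else 'BLOCKED'}")
```

To run it against a real site you would fetch the live robots.txt yourself and pass its text in; the parsing step stays the same.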

Let me tell you about AI.com[19]. Earlier this month, they ran a Super Bowl ad, $15 million for the slots, plus the $70 million they'd paid for the domain name. Eighty-five million dollars in total. The ad told people to go to AI.com and claim their handle. The site crashed within minutes. A company that claims to be "building infrastructure for AGI" couldn't keep a website running during the traffic spike they'd paid to create.

Here's the kicker: despite the crash, the ad was ranked the #1 performing Super Bowl commercial, generating 9.1x more engagement than the median spot. The brand building worked. They just couldn't convert any of it because the technical foundation wasn't there.

Look, I'll be honest: this is what my company, Wayfinder, is all about. This is why I founded it and built our first product, Compass. There may be others doing Level 1 but I haven't seen them. Compass checks whether AI can actually fetch your pages, read your content, navigate your site. Not optimisation. Diagnosis. "Are you even in the game?" We published our own research on how AI agents navigate websites and the results were sobering: even well-optimised sites fail basic navigation tasks more often than you'd think.

Level 2 is content quality: is your information correct and up-to-date? Does your content actually answer the questions people ask AI? Is your terminology aligned with how users phrase queries? This is what we're building next with Wayfinder Lens. Or you can do it yourself: audit your own content, test it with actual AI tools, see what comes back. It's not rocket science, it just takes time. Smart marketers like Mike King and Dan Petrovic are doing really interesting stuff in this space. So are lots of AI and ML researchers but I'm not sharing those because I don't want to lose my competitive edge...

These two levels are the technical foundation. They're measurable, they're fixable, and they're prerequisites for everything else.

Here's where I'm going to be honest with you in a way that most vendors won't be: Wayfinder does Levels 1 and 2. We don't do Levels 3-5, and we're not going to pretend we do. We also probably never will.

For Level 3, brand mention frequency, you want something like the SparkToro/Gumshoe approach. Run prompts 60-100 times, measure how often you appear. Not "you rank #3," that's noise. "You appear in 47% of responses", that's signal. Still noisy, but directionally useful with enough sample size. Talk to SparkToro or Gumshoe.
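As a rough sketch of what "appears in 47% of responses" looks like with its uncertainty attached, the snippet below counts mentions across repeated runs and puts a Wilson score interval around the rate. The responses and the brand names are made up, the substring match is deliberately naive, and both helper names are hypothetical.

```python
import math


def appearance_count(responses: list[str], brand: str) -> int:
    """Count responses that mention the brand (case-insensitive substring)."""
    needle = brand.lower()
    return sum(needle in text.lower() for text in responses)


def wilson_interval(hits: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a proportion (z = 1.96 for ~95%)."""
    if n <= 0:
        raise ValueError("n must be positive")
    p = hits / n
    denom = 1 + z ** 2 / n
    centre = (p + z ** 2 / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z ** 2 / (4 * n ** 2)) / denom
    return centre - half, centre + half


# Made-up sample: 47 of 100 responses mention the hypothetical brand "Acme".
responses = ["Try Acme or Globex."] * 47 + ["Globex is a solid pick."] * 53
hits = appearance_count(responses, "Acme")
low, high = wilson_interval(hits, len(responses))
print(f"Appeared in {hits}/{len(responses)} runs, ~95% interval {low:.0%} to {high:.0%}")
```

Even at 100 runs the interval spans roughly ten points either side, which is what "still noisy, but directionally useful" means in practice.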

For Level 4, consideration set and brand recall, you want traditional brand tracking. "When you think of [category], which brands come to mind?" Pre/post surveys measuring awareness, consideration, preference. This is how TV has been measured for decades, and it works. Talk to YouGov, or Tracksuit, or Latana, or any decent brand tracking firm.
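For a feel of what a pre/post survey actually produces, here is a minimal sketch of the lift arithmetic using a standard two-proportion z-test. The survey numbers are invented and `brand_lift` is a hypothetical helper. A real tracking firm will handle weighting, sampling and control groups that this ignores.

```python
import math


def brand_lift(pre_aware: int, pre_n: int, post_aware: int, post_n: int):
    """Return (absolute lift, relative lift, z-score) for pre/post awareness.

    The z-score is a standard two-proportion test with a pooled rate.
    """
    p_pre, p_post = pre_aware / pre_n, post_aware / post_n
    pooled = (pre_aware + post_aware) / (pre_n + post_n)
    se = math.sqrt(pooled * (1 - pooled) * (1 / pre_n + 1 / post_n))
    lift = p_post - p_pre
    return lift, lift / p_pre, lift / se


# Made-up survey waves: 120 of 1,000 aware before, 150 of 1,000 after.
lift, relative, z = brand_lift(120, 1000, 150, 1000)
print(f"Lift: {lift * 100:.1f} points ({relative:.0%} relative), z = {z:.2f}")
```

Note what this does not tell you: a pre/post difference on its own can't be pinned on AI specifically. For that you need an exposed-versus-control design, which is exactly what the brand tracking firms are for.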

For Level 5, purchase attribution, I've got bad news: direct attribution is structurally impossible. The best you can do is Marketing Mix Modelling, which uses statistical techniques to estimate contribution over time. It requires scale, time series data, and acceptance that you're getting estimation, not measurement. Talk to Analytic Partners, or Nielsen, or Ekimetrics, or an in-house marketing science team if you're lucky enough to have one.

Why am I telling you to talk to other people? Because the GEO industry is full of vendors claiming to measure everything, and I think that's bullshit. I'd rather tell you what we actually do well and point you to people who do the other stuff well. That's more useful than pretending we've solved problems we haven't, and maybe even can't.

There's one last thing I want to address, and it's not about measurement at all. It's about who owns this stuff inside organisations.

Right now, AI visibility has mostly been grabbed by SEO teams, because "search" is in the name. But SEO teams think in performance metrics. They think about rankings and clicks and conversions. They don't have the media planning frameworks to understand that some channels are brand building, not direct response. So they're trying to measure AI search like Google search, and they're getting frustrated that it doesn't work, and posting diatribes against "GEO gurus" on LinkedIn.

Performance marketing teams have the same problem, only worse. They measure everything. That's what they do. The idea that some channels can't be measured at the transaction level is philosophically offensive to them.

PR and comms teams are actually closest to the right mental model: they understand earned media, they understand that you influence rather than control outcomes, and they've never had click attribution and have learned to live with it. But they don't have the technical skills to fix accessibility issues, and they're not usually in the conversation about "search" at all.

Brand teams get the brand building framing, but they lack the technical execution and search mechanics knowledge.

Here's the uncomfortable truth: AI visibility requires an integrated team. You need media planning and strategy thinking to understand that this is brand building, not performance. You need technical SEO ability to actually fix the accessibility issues. You need a PR and earned media mindset to accept that you influence, not control, outcomes.

No one discipline has all three. Most organisations have these skills siloed in different teams that don't talk to each other, competing for budget and arguing about attribution. That's the real problem.

If you're an SEO, you need to learn media planning. If you're in media, you need to understand technical accessibility. If you're in PR, you need to get comfortable with data. Or you need to build a team that spans all three and actually coordinates.

This is a new discipline. It doesn't fit neatly into existing org charts. It is also not going away. The people I see online with their heads in the sand about how dramatic, and persistent, a change AI represents have clearly not been paying attention. If you have got this far and are not convinced, go read the viral Matt Shumer essay and reflect on the points it's trying to make.

The companies that figure out how to do this stuff well will have an advantage. The ones that keep fighting about who owns the budget will keep arguing about measurement while the world moves on without them.

I've thrown a lot of data and frameworks at you, so let me try to make it concrete.

If you're an SEO: Your skills are still valuable. Maybe more so than ever. Technical accessibility is a real problem that needs fixing, and you're the one who knows how to fix it. But you need to reframe what success looks like. It's not "we rank #1 for this prompt", that doesn't mean anything. It's "AI can access our content, our content answers the questions people actually ask, and our brand health metrics are improving over time." Stop arguing about prompt visibility tools and start fixing the technical foundation. Go get a Kantar account, buy some stupid chinos, and read The Long and the Short of It.

If you're in media or strategy: This is your domain. You already know how to measure brand building without click attribution. You've been doing it for TV for decades. Apply the same frameworks here: brand recall, lift studies, consideration set research, Marketing Mix Modelling for the really sophisticated stuff. But you can't skip the technical foundation. Partner with someone who can audit accessibility, because none of your brand strategy matters if AI can't actually retrieve the content. Sign up to Codecademy, install Claude Code, add "Python ninja" to your skills on LinkedIn.

For everyone: Work up the hierarchy, not down.

Stop fucking arguing about what to call it, GEO, AIO, AI search, whatever, and actually start working together to nail this for your clients before someone else figures it out and steals their lunch.

Look, I know some people will read all this and think I'm wrong. So let me address the objections I expect to get.

"But we need metrics to justify investment."

Yes, you need metrics. But you need appropriate metrics. TV has metrics: brand recall, lift studies, MMM. Just not click-through rates. The absence of click attribution doesn't mean the absence of measurement. It means you need different measurement.

"Prompt visibility correlates with traffic."

Maybe? Correlation isn't causation. And even if true, you're measuring the tip of the iceberg. The 1% who click are interesting, but they're not the whole story. You're ignoring the 99% who didn't click but might have been influenced anyway.

"This sounds like giving up on measurement."

No. It's choosing the right measurement. Brand recall studies exist. Lift measurement exists. MMM exists. I'm not saying don't measure, I'm saying stop pretending you can measure something that can't be measured, and start measuring the things that can.

"Performance marketers won't accept this."

That's their problem. Reality doesn't conform to org charts. If performance marketers want to keep insisting that AI search must work like Google search because it has "search" in the name, they're going to keep getting frustrated. The data doesn't care what they accept.

"You're just saying this because you sell an accessibility tool."

You've got the order wrong. I'm not saying this because I have something to sell, I built something to sell because I believe this. I'm putting my money, my time, my reputation into something I think is a genuine gap. It would be easier to make prompt tracker #459 and sell it on the basis of my experience and connections. There's clearly demand for that. Instead I made a tool nobody is asking for because I think they're asking the wrong questions. Make of that what you will.

"What about AI agents that will actually transact?"

Agents have been transacting for years. Disagree? What about Alexa? Remember all those stories about kids ordering stuff with their parents' Echo devices? No, you don't, because everyone immediately switched that capability off. Nobody trusts AI with their credit card. Whether the tech is there or not, we're a solid decade away from broad consumer adoption. Chip and PIN took years to be perceived as safe even though it's vastly more secure than magnetic stripe. You think people are going to blindly hand their credit card to ChatGPT in the millions? And even when they eventually do, the research and consideration phase still happens first. Mental availability still matters.

"This is just cope from someone who can't measure it."

I don't really care what other companies do, or what agencies do. If they want to do something different, fine. But I think they're leaving money and results on the table. Measure, don't measure, I don't give a shit. There's a reason I got out of the direct consultant game to make a SaaS business. I'd rather build tools for people who get it than try to convince skeptics one painful meeting at a time.

"What about Perplexity and SearchGPT with citations?"

These are closer to traditional search, I'll grant you. They include links, they drive some clicks. But look at the data: still roughly 1% of traffic, still 93% no-click. The citations are usually "source" not "buy here." And even when someone clicks through from Perplexity, they're at the research phase, not the purchase phase. It's marginally more measurable, but it's still fundamentally brand building.

"Small businesses can't afford brand tracking surveys."

Fair. If you're an SMB, focus on Levels 1 and 2, which are affordable, and use ChatGPT referral traffic trends in GA4 as a directional proxy for Level 3. Accept that you're flying partially blind, like you already do with word-of-mouth. Not everything needs enterprise-grade measurement to be worth doing.
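If you want that directional proxy without a dashboard, a minimal sketch is to bucket exported referrer URLs yourself. This assumes you can export raw referrers from your analytics tool. The hostname list is an assumed, incomplete set of AI assistant domains, and `classify_referrer` is a hypothetical helper.

```python
from collections import Counter
from urllib.parse import urlparse

# Assumed, incomplete list of AI assistant referrer hostnames.
AI_REFERRER_HOSTS = {
    "chatgpt.com",
    "chat.openai.com",
    "perplexity.ai",
    "claude.ai",
    "gemini.google.com",
    "copilot.microsoft.com",
}


def classify_referrer(referrer: str) -> str:
    """Bucket a referrer URL as 'ai', 'direct' (empty), or 'other'."""
    if not referrer:
        return "direct"
    host = (urlparse(referrer).hostname or "").removeprefix("www.")
    return "ai" if host in AI_REFERRER_HOSTS else "other"


# Made-up export of session referrers.
referrers = [
    "https://chatgpt.com/",
    "https://www.perplexity.ai/search?q=toothbrush",
    "https://www.google.com/",
    "",
]
print(Counter(classify_referrer(r) for r in referrers))
```

Watch the trend in the "ai" bucket, not the absolute number. And remember the toothbrush: anyone who was influenced by AI and typed your URL a month later lands in "direct", which is the whole point of this piece.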

[1] ChatGPT usage data, late 2025. Reported by The Information (Dec 2025) and confirmed by Sam Altman announcing 800M WAU in October 2025 (TechCrunch), rising to ~900M by December.

[2] OpenAI / NBER working paper, "How People Are Using ChatGPT," September 2025. Analysis of 1.5 million conversations. Full paper: PDF (OpenAI).

[3] IAB / Talk Shoppe, "When AI Guides the Shopping Journey: Opportunities for Marketers in the Age of AI-Driven Commerce," October 2025. Press release.

[4] Deloitte, "2025 Holiday Retail Survey," 2025. 56% plan to use AI chatbots to compare prices; 47% to summarise reviews.

[5] Patrick Stox, Ahrefs, "ChatGPT Has 12% of Google's Search Volume but Google Sends 190x More Traffic to Websites," February 2026.

[6] Search Engine Land, "AI drives 1% of traffic, mostly from ChatGPT," 2025.

[7] Momentic Marketing, "Google AI Mode Click-Through Rate Analysis," 2025.

[8] Seer Interactive, "Case Study: 6 Learnings - How Traffic from ChatGPT Converts," 2025. Note: this is a single-site case study. Similarweb's broader dataset (3rd Annual Global Ecommerce Report, 2025) found ChatGPT converts at 11.4% vs 5.3% for organic, which is directionally consistent but less dramatic.

[9] Byron Sharp, How Brands Grow (Oxford University Press, 2010), based on research from the Ehrenberg-Bass Institute for Marketing Science.

[10] The 95:5 rule is from Ehrenberg-Bass / John Dawes research on category purchase cycles. See also LinkedIn's summary of the 95:5 rule.

[11] ROPO data compiled from multiple sources: GE Capital Retail Bank Shopper Study (2013, 81% research online); Klarna consumer surveys; Harvard Business Review study of 46,000 consumers; Dinmo, Center.ai, and Clerk.io research guides. Poland study 2021–2023 on electronics/appliances.

[12] GE Capital Retail Bank's Second Annual Shopper Study, 2013. Survey of 3,220 consumers who made purchases of $500+. Average 79 days research across 12 product categories; range of 40–137 days.

[13] Westernberg, E. (2010). Commonly cited as "56 touchpoints between inspiration and transaction." Corroborated directionally by Google/Ipsos research and B2B studies showing 20–60+ touchpoints depending on purchase complexity.

[14] LLM non-determinism is well-documented in ML research. Key factors: batch size effects during inference, Mixture-of-Experts routing variability (e.g. GPT-4/5 architecture), floating-point arithmetic on GPUs, and context-dependent output variation. See also Hugging Face: When Are LLMs Deterministic?

[15] SparkToro and Gumshoe.ai, "New Research: AIs Are Highly Inconsistent When Recommending Brands or Products," December 2025.

[16] History of TV advertising measurement. Key milestones: Nielsen Audimeters (1950s), people meters (1987), brand recall studies (1960s onwards), Marketing Mix Modelling (1980s onwards). See Nielsen's history for timeline.

[17] Nielsen cross-media effectiveness research: TV advertising delivers ~23% brand awareness lift versus ~9% for digital channels. See also AdWave's summary of TV brand awareness effectiveness (Q4 2025) and Simulmedia's brand lift analysis.

[18] Les Binet and Peter Field, The Long and the Short of It: Balancing Short and Long-Term Marketing Strategies, IPA, 2013. Based on analysis of the IPA Databank of marketing effectiveness case studies.

[19] AI.com Super Bowl coverage: Tom's Hardware and IBTimes, February 2026.