The advertising industry has spent years claiming AI will kill creativity. The data tells a different story: most creative work was already mediocre, and AI just made that impossible to ignore.

The slop is coming from inside the house.

Here's a take that's going to upset a lot of people who work in advertising: you are probably not as creative as you think you are. And I don't mean that in the motivational-poster "we can all be more creative!" sense. I mean it in the hard, evidence-backed, our-industry's-own-data-proves-it sense.

The advertising and marketing industry has spent the last two years in a panic about AI killing creativity. There have been think pieces, conference panels, open letters, LinkedIn jeremiads from creative directors who suddenly care very deeply about the soul of the craft. The argument, roughly, is that AI produces slop, that it homogenises output, that it will destroy the magical human spark that makes great advertising great.

And I think most of this is copium. Not because AI isn't capable of producing mediocre work (it absolutely is), but because the people making these arguments conveniently ignore that the industry was already drowning in mediocre work long before anyone had heard of GPT-4. AI didn't break creativity. It made an existing problem visible by giving everyone access to the same competent-but-unremarkable output that most professionals were already producing.

Here's my conclusion, stated bluntly so you can decide now whether to keep reading or close the tab: AI raises the floor on competent work so high that only genuinely exceptional output stands out. And most of what the "creative industry" produces isn't exceptional, it's competent. That was true before AI. It will be true after. The difference is that now there's data, and the data doesn't care about your feelings.

Before we get anywhere near artificial intelligence, let's talk about the baseline. Let's look at what the advertising industry produces when left entirely to its own devices, measured by its own standards, judged by its own peers.

The IPA Effectiveness Awards are arguably the most rigorous benchmark in advertising. They don't just judge whether an ad looked nice or won a popularity contest; they require hard evidence that the work actually drove business results. The IPA Effectiveness Databank contains over 1,600 case studies going back to 1980[1], and it's the foundation for most of what we know about what makes advertising work.

The 2024 cycle drew 79 submissions, the highest entry count in three decades, and the IPA awarded 28 prizes[2]. Only 30 agencies in the entire UK hold IPA Effectiveness Accreditation. Thirty. Out of thousands of agencies operating in one of the world's most mature advertising markets.

But the IPA is selective by design, so let's look at the volume shows. Cannes Lions received 26,900 entries in 2025[3]. Of those, 852 won Lions. That's roughly 3%. And remember, these aren't random samples of all advertising produced that year. These are the campaigns that agencies were proud enough to enter, that they thought represented their best work, that they paid real money to submit: the pre-filtered cream of the crop, and 97% of it still didn't make the cut.

D&AD, which to be fair is the most selective show in the industry and will genuinely award no prize at all if nothing meets the standard, received 11,689 entries in 2025[4]. It awarded 668 Pencils in total, including just 3 Black Pencils. Overall win rate: 5.7%. The One Show received 20,038 entries in 2024 and gave out 1,790 awards, a win rate of roughly 8.9%[5]. Even at the most generous of the three shows, over 91% of submitted work wins nothing.
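The win rates quoted for the three volume shows are simple ratios of awards to entries. Here is a minimal Python sketch that recomputes them from the figures above. It assumes each award goes to a distinct entry; the footnotes don't say whether a single entry can collect more than one award, and if some do, the true share of winning entries is lower still.

```python
# Entry and award counts as quoted above (footnotes [3], [4], [5]).
SHOWS = {
    "Cannes Lions 2025": (26_900, 852),
    "D&AD 2025": (11_689, 668),
    "The One Show 2024": (20_038, 1_790),
}


def win_rate(entries: int, awards: int) -> float:
    """Awards as a percentage of entries.

    Treats every award as going to a distinct entry; if some entries
    won more than one award, the share of winning entries is lower.
    """
    return 100 * awards / entries


for show, (entries, awards) in SHOWS.items():
    rate = win_rate(entries, awards)
    # Complement of the win rate: the share of entries that won nothing.
    print(f"{show}: {rate:.1f}% won, {100 - rate:.1f}% won nothing")
```

Cannes works out at about 3.2%, which is where the rounded "3% versus 97%" split comes from, and The One Show's complement is the "over 91%" figure.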

These aren't obscure benchmarks. These are the industry's own gold standards for creative excellence. And by every single one of them, the vast majority of work that professionals produce, including the work they were proud enough to enter, doesn't make the cut. The work that was never entered, the stuff that the agencies themselves didn't think was award-worthy, is by implication even further from excellence.

So when someone tells me that AI is going to destroy creativity in advertising, my first question is: which creativity, exactly? The 3% that wins at Cannes, or the 97% that doesn't? Because I think we're talking about very different problems.

I want to break from the data for a moment because I think there's a question that the awards numbers don't answer: why is most advertising mediocre? Is it because the people making it aren't talented? I don't think so. I think it's because the process by which ideas are generated and selected in most agencies is structurally designed to produce safe, derivative work.

I've spent years in strategy rooms where "creative thinking" means the most senior person in the room repeating whatever they heard at their last lunch-and-learn with a media owner. There's a principle I use when evaluating strategy: real strategy is defined by what you choose not to do, not what you do. Most "strategy" in advertising doesn't work this way in practice. It's seniority plus recency bias dressed up in frameworks, and the output is predictably average because the process rewards consensus, not originality.

Strategic brainstorms default to the loudest voice from the most senior person, not the best idea. I've spent time experimenting with restructured brainstorming: asynchronous ideation, anonymous submission to remove bias toward whoever proposed an idea, and dedicated time for individual thinking before group sessions. These approaches are well-documented. They're aligned with accessibility and DE&I principles. They produce better results. And they're almost never used, because the industry isn't serious about maximising creative quality; it's comfortable with the existing power dynamics that produce mediocre consensus ideas.

My dad has a saying: you can't bullshit a bullshitter. I've been on the inside. I know how the sausage gets made. And when I hear people mourning the "sacred creative process" that AI is supposedly destroying, I think about all those brainstorms where the CEO's first idea became the campaign because nobody in the room had the seniority or the job security to say "that's derivative and we can do better." AI isn't killing a sacred process. It's threatening a comfortable one.

To be fair, there are agencies where this doesn't apply, and the evidence is in the awards they win. Those places are genuinely in the elite 3%, and when they have something to say about AI and creativity, it's worth listening, because they've earned the right by doing everything else well. But they are the exception, not the rule, and most of the people shouting loudest about AI are not from those agencies. VCCP's Bernadette won five Cannes Lions with "Daisy the AI Granny"[13], a scam-baiting campaign literally made with AI. The elite aren't mourning creativity. They're too busy using these tools to win awards.

It's not just that most creative work doesn't win awards; a huge proportion of it doesn't even perform at a basic level. System1 has tested over 120,000 ads through its Test Your Ad platform, and the data is stark: 60% of all ads tested score just 1-Star or 2-Star on its effectiveness scale[6]. Only 4% achieve a 5-Star rating. And the bottom of the scale isn't just "not great"; it's functionally useless. 1-Star ads achieve effectively zero long-term brand growth, and 2-Star ads generate just 0.5% share growth, barely distinguishable from doing nothing at all.

This is the industry's own testing framework, built by an organisation that the industry respects and pays real money to use. And it says that the majority of advertising, produced by professionals, briefed by strategists, signed off by clients, is achieving essentially nothing.

WARC's analysis of 1,394 case studies found that profit ROI among campaigns considered successful enough to submit has improved over the last few years from 1.9:1 to a staggering 2.5:1[7]. These are the best campaigns, the ones people put forward as evidence that their work performs. Even the industry's showcase barely doubles its money. And over a third of marketers, 34.2%, say their company rarely or never measures marketing ROI at all[8]. A large chunk of the industry is flying blind on whether its work even works.

People are perfectly capable of being unimaginative and ineffective without any help from artificial intelligence. This is not an AI problem. This is a people problem, and it predates every large language model by decades.

If the current state is bad, the trajectory is worse. Peter Field's "The Crisis in Creative Effectiveness," published through the IPA and Cannes Lions in 2019, analysed 24 years of IPA Databank data and found something genuinely alarming[9]. This was 2019. The most impressive thing AI could do was beat people at board games. ChatGPT was three years away from existing.

Between 1996 and 2008, creatively awarded campaigns were 12 times more efficient than non-awarded work at driving market share. Twelve times. That's an extraordinary multiplier, and it's the single strongest argument for investing in creative quality. But between 2006 and 2018, that multiplier collapsed to just 4x. Still meaningful, but a two-thirds decline. At the same time, the proportion of short-term campaigns, work evaluated over six months or less, rose from around 10% in 2002 to 25% in 2018. The industry was simultaneously getting less creative and more short-termist, and the two trends were reinforcing each other.
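The two trends in that paragraph are easy to sanity-check. This short Python sketch, using only the figures quoted above from Field's report (the 2002 short-term share is the approximate "around 10%"), confirms that 12x falling to 4x is a two-thirds decline and that short-term campaigns grew to about two and a half times their 2002 share.

```python
def relative_change(before: float, after: float) -> float:
    """Fractional change from `before` to `after` (negative means a decline)."""
    return (after - before) / before


# Figures from Field (2019) as quoted above [9].
multiplier_drop = relative_change(12, 4)  # awarded vs non-awarded efficiency multiplier
short_term_growth = 25 / 10               # short-term campaign share, 2002 -> 2018 (approx.)

print(f"Efficiency multiplier: {multiplier_drop:.0%} change")
print(f"Short-term campaigns: {short_term_growth:.1f}x their 2002 share")
```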

Field's own warning, published in 2019, before the current AI panic, was blunt: "Despite our warnings, the misuse of creativity has continued to grow and the effectiveness advantage has continued to decline." The creative industry was already losing its edge. Award-winning work was becoming less meaningfully differentiated from average work. The effectiveness multiplier that justified premium creative investment was eroding year on year.

And then along came AI, and everyone decided that this was the thing killing creativity. Not the decade-long collapse in creative effectiveness that preceded it. Not the structural incentives that reward safe work. Not the short-termism that Field had been warning about for years. No, it must be the robots. Much easier to blame a technology than to look in the mirror.

So we've established that the industry was already mediocre by its own standards, already producing mostly ineffective work, already on a declining trajectory before AI entered the conversation. Now let's look at what AI actually does, because the science is fascinating and it doesn't say what most people think it says.

The most important study on AI and creativity that almost nobody in advertising has actually read is Doshi and Hauser's 2024 paper in Science Advances[10]. They ran a properly controlled experiment: 293 writers, 600 independent evaluators, 3,519 blind evaluations. Writers were split into three conditions: human-only, human with one AI-generated story idea, and human with five AI-generated ideas. Before writing, every participant completed the Divergent Association Task, a validated measure of inherent creative ability.

The headline numbers look like good news for AI: stories written with access to five AI ideas were rated 8.1% higher on novelty and 9.0% higher on usefulness compared to human-only stories. They were also rated as better written, more enjoyable, and less boring. AI made the writing better. Argument over, right?

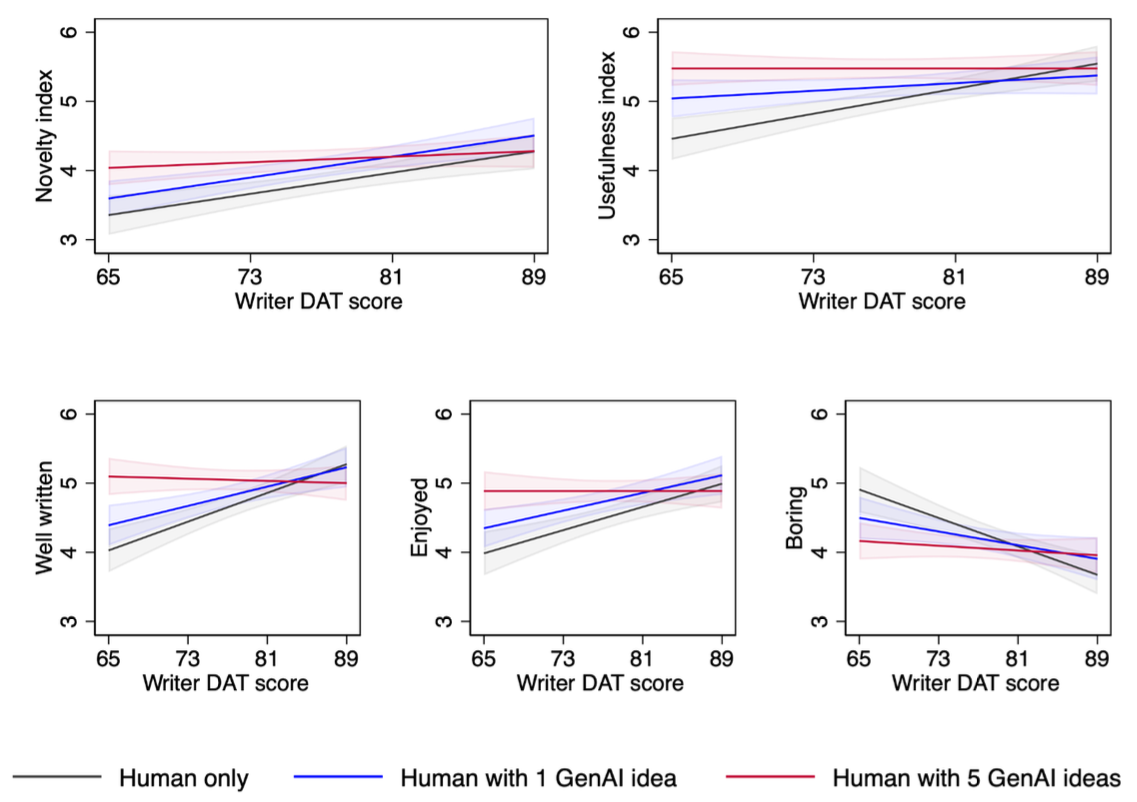

Not remotely. Because when you break the results down by the writers' inherent creativity, the picture changes completely. For writers who scored low on the creativity test, access to five AI ideas produced enormous gains: 10.7% more novel, 11.5% more useful, 26.6% better written, 22.6% more enjoyable, and 15.2% less boring. These are massive improvements. AI turned below-average writers into competent ones.

But for writers who scored high on the creativity test? Access to AI ideas had little to no measurable effect. They were already producing high-quality work. AI didn't make them better because they didn't need the help.

In the chart above, look at the human-only line. Steep gradient. Without AI, inherent creativity matters enormously for the quality of your output. Now look at the AI-assisted lines. Dramatically flatter. With AI, inherent creativity matters much less. The gap between talented and average writers shrinks to almost nothing across most of the range.

The crossover point, where unaided human writers finally start outperforming what an average writer with AI can produce, sits at a creativity score of roughly 81 on the "well-written" dimension. The sample mean was 77.24, which puts the crossover at approximately the top quarter of writers in the study. In other words, on this dimension, roughly three-quarters of unaided writers in the sample never outperformed what an average writer armed with AI could produce.
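Turning "a score of 81 against a mean of 77.24" into "the top quarter" depends on how spread out the scores are, and that spread isn't quoted here. As a sketch, assuming scores are roughly normally distributed, the Python below back-solves the standard deviation the top-quarter reading implies, then shows how the share of writers below the crossover moves under a few other spreads. The trial SDs are hypothetical values chosen for illustration, not figures from Doshi and Hauser.

```python
from statistics import NormalDist

MEAN_DAT = 77.24  # sample mean creativity score quoted above
CROSSOVER = 81.0  # approximate crossover on the "well-written" dimension


def implied_sd(mean: float, cutoff: float, percentile: float) -> float:
    """SD a normal distribution needs for `cutoff` to sit at `percentile`."""
    z = NormalDist().inv_cdf(percentile)
    return (cutoff - mean) / z


def share_below(mean: float, sd: float, cutoff: float) -> float:
    """Fraction of a normal population scoring below `cutoff`."""
    return NormalDist(mean, sd).cdf(cutoff)


sd = implied_sd(MEAN_DAT, CROSSOVER, 0.75)
print(f"'Top quarter' implies an SD of about {sd:.1f} points")

# Hypothetical spreads, not taken from the paper, to show sensitivity.
for trial_sd in (4.0, 5.6, 7.0):
    share = share_below(MEAN_DAT, trial_sd, CROSSOVER)
    print(f"SD {trial_sd}: {share:.0%} of writers below the crossover")
```

The "roughly three-quarters" figure holds if the spread is around five or six points; a tighter spread would push more writers below the crossover, a wider one fewer.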

Let that sit for a moment. Three-quarters of writers, working entirely under their own steam, producing work that doesn't beat what someone less talented could produce with AI assistance. That's the equaliser effect, and it maps almost perfectly onto the awards data we looked at earlier. The vast majority of creative professionals are producing work that competent AI could match, and a small minority are genuinely operating at a level that AI can't reach.

Here's the part that should make the "AI is killing creativity" crowd genuinely uncomfortable: the writers in Doshi and Hauser's study couldn't tell the difference. Their self-assessments of their own stories showed no statistically significant differences between conditions. They literally could not distinguish between their unaided and AI-assisted work, despite independent evaluators rating the AI-assisted stories as meaningfully better.

This is Dunning-Kruger territory in a creative domain, and it fits with Urban and Urban's 2024 research in Thinking Skills and Creativity, which found that people with low creative ability but high creative self-concept systematically overestimate their own creativity[11]. In other words, the people most convinced of their own creative brilliance are often the least equipped to evaluate it. And when AI makes them measurably better, they don't even notice.

I want to break from the research again, because there's a practical dimension here that the "every word must be handcrafted" crowd ignores, and the fact that they ignore it reveals something about them: they've never worked at enterprise scale.

I've worked on clients running massive content programs: multiple websites, multiple brands, multiple markets. Do you think Samsung has a literature graduate lovingly handcrafting unique product descriptions for every SKU across every market? Of course not. You're talking about thousands or tens of thousands of pages that need to be created at speed. The way that gets done is heavy templating. You give a template to a room of university grads and tell them to write ten product pages a day. Or you run programmatic content at scale, boilerplate descriptions with keyword insertions, placeholder swaps and title variations, the way e-commerce sites with thousands of products have done it for years.
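To make "programmatic content" concrete, here is a minimal Python sketch of the slot-filling approach described above, using the standard library's `string.Template`. The product record, the field names and the two title variants are all hypothetical; a real programme would pull records from a product feed and run the same templates across thousands of SKUs.

```python
import random
from string import Template

# Hypothetical product record; real programmes pull these from a product feed.
product = {"name": "Aero 5 Kettle", "capacity": "1.7L", "keyword": "fast-boil kettle"}

# Title variations: the same slots, rotated between a few fixed patterns.
TITLE_VARIANTS = [
    Template("$name | $keyword"),
    Template("Buy the $name, a $capacity $keyword"),
]

# Boilerplate body with keyword insertion and placeholder swaps.
BODY = Template(
    "The $name is a $capacity $keyword designed for everyday use. "
    "Order today for fast delivery."
)


def build_page(record: dict, rng: random.Random) -> dict:
    """Fill one templated page: a rotated title variant plus the boilerplate body."""
    title = rng.choice(TITLE_VARIANTS).substitute(record)
    return {"title": title, "body": BODY.substitute(record)}


rng = random.Random(42)  # seeded so the title rotation is reproducible
page = build_page(product, rng)
print(page["title"])
print(page["body"])
```

Apart from the swapped slots, every page this produces is structurally identical, which is exactly the "mechanically filling slots" output the next paragraph contrasts with AI.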

Nobody was calling that "creative." Nobody was mourning the death of artisanal copywriting when it was a 22-year-old following a template at 4pm on a Friday. But AI doing the same job, arguably better because it at least writes something fresh each time rather than mechanically filling slots? Suddenly that's "slop." Suddenly it's a crisis.

The people arguing that every single piece of content should be handwritten are exposing themselves as cottage-industry copywriters who've never had to deliver at scale. The luxury of bespoke doesn't exist when you're running content across dozens of markets for an enterprise client. It never did. AI isn't replacing artisanal craft in these contexts, it's replacing the template-and-grad pipeline that was already doing the work. And it's probably doing it better, because Doshi and Hauser just showed us that AI makes average writers produce above-average work, which is exactly the uplift you want when you're operating at volume.

The irony is thick enough to choke on: the same industry that built massive content factories staffed by junior writers following rigid templates is now telling us that AI-generated content is an existential threat to creativity. And the agencies got paid handsomely to produce it, charging retained fees justified by the headcount needed to fill templates at scale. The slop they're worried about looks suspiciously similar to the slop they were already producing. They just got to charge more for it.

There's one more piece of research that completes the picture, and it comes at the question from a completely different angle. Zhou, Lee and Gu tracked 31,076 digital artists over 27 months across the release of mainstream AI image generation tools like Stable Diffusion, and published their findings in Science Advances in early 2025[12].

What they found is that a concentrated subset of AI-assisted creators, the "masterminds," drove the majority of genuinely novel contributions to the creative frontier. The broader base, the "hive," produced more work thanks to productivity gains, but the average rate of truly novel contributions actually decreased. More output, less originality. A dilution effect.

On the surface this might seem to complicate the equaliser narrative from Doshi and Hauser, but actually it's saying the same thing from the other direction. True creativity, the kind that pushes boundaries and creates genuinely new work, is concentrated in a small elite, with or without AI. The middle, which is where most professionals sit, can use AI to produce more competent work, faster. But they're not producing anything genuinely original. They're producing more of what's competent.

Part of the reason I find this whole debate so personally irritating is that my wife is an artist. Not a "creative" in the advertising sense, an actual artist, trained in fine art, who paints and sells her paintings. Via her, I have access to a world of painters, sculptors, and artists of various kinds, and when I see their work in galleries and exhibitions I feel something that no amount of competent marketing copy has ever made me feel. The people attempting to conflate that kind of creativity with the production of blog posts and banner ads are flattering themselves beyond all recognition. And here's the thing: the genuinely creative people I know, my wife included, are the ones most actively exploring how AI can make their practice more efficient and more interesting. They're not threatened by it. They're curious about it. Because they know the thing they do isn't the thing AI replaces.

AI doesn't change who's creative. It just makes the distinction between creative and competent impossible to ignore.

So here's where we land, and I want to be constructive about this because leaving the argument at "you're all mediocre" is satisfying but not useful.

Layer one: the advertising industry was already mediocre by its own standards. The awards data proves it. The effectiveness data proves it. The collapsing effectiveness multiplier in Field's data proves it. This predates AI by decades. The process by which ideas are generated and selected structurally rewards safe, derivative work. The industry is comfortable with this because the people who benefit from the status quo are the same people who get to decide what's "creative."

Layer two: AI makes this gap visible by giving everyone access to competent output. Doshi and Hauser showed that AI turns below-average writers into above-average ones, but does almost nothing for the genuinely talented. Zhou, Lee and Gu showed that the creative frontier is still pushed by a concentrated few, while the broader base produces more competent work but less novel work. The floor has risen. The ceiling hasn't.

Here's the synthesis, and it's the uncomfortable bit: the people most upset about AI in creative work aren't the genuinely brilliant ones. They're the ones in the middle who were coasting on the fact that competence used to be scarce enough to be valuable. When you could charge a premium just for being able to string a sentence together or produce a half-decent layout, the bar was low enough that a lot of people could clear it and call themselves creative. AI raised that bar, and suddenly "competent" isn't enough to justify the rates.

The same industry that is loudest about AI slop is the industry that has been producing the most formulaic, lowest-common-denominator content for decades. Banner ads, retargeting creative, email blasts, Instagram carousels, listicle blog posts: none of it was high art before GPT-4. The irony, and I think it's a genuinely beautiful irony, is that AI slop looks like advertising slop because that's what it was trained on. The machine learned mediocrity from the people now complaining about mediocrity. The slop is coming from inside the house.

If AI demonstrably raises the floor on competent work, and the floor was already embarrassingly low, then maybe the answer isn't to fight AI to protect mediocre human output. Maybe it's to stop pretending that the mediocre human output was ever worth protecting.

For the minority who are genuinely creative, the data is clear: AI doesn't threaten you. Doshi and Hauser showed that high-creativity writers see no measurable change in output quality with AI assistance. The top tier remains the top tier. If anything, AI frees you from the grunt work so you can spend more time on the stuff that actually requires talent.

For the majority who are competent but not exceptional, and I include myself in this category on most days, the opportunity is real but it requires honesty. Use AI to raise your baseline. Use it to handle the template work, the first drafts, the scaled content programs that were never going to be handcrafted masterpieces anyway. But stop pretending that the work you were doing before was creative. It was competent. It was professional. It was fine. But it wasn't the thing you're claiming AI is killing.

For the industry as a whole, maybe the right response to AI isn't to circle the wagons around a creative process that Field's data showed was already failing. Maybe it's to use AI to raise the baseline so aggressively that the average piece of marketing is actually, for the first time, genuinely decent. And then redirect the human talent, the real talent, the top-quarter talent, toward the work that actually needs it. The campaigns that win Grand Prix. The strategies that deliver 12x multipliers. The ideas that push boundaries rather than filling templates.

Stop mourning the death of creativity that wasn't there in the first place. Start using the tools that could actually fix the problem you didn't want to admit you had.

[1] IPA Effectiveness Databank. Over 1,600 case studies since 1980. Sources: IPA Effectiveness Databank (EASE); also referenced in Binet & Field's work and on WARC's IPA partnership page.

[2] IPA Effectiveness Awards 2024. 79 entries (the highest in three decades); 28 prizes awarded (5 Gold, 7 Silver, 16 Bronze), plus 9 special prizes. 30 agencies hold IPA Effectiveness Accreditation. Sources: IPA Effectiveness Awards 2024; Ethical Marketing News coverage.

[3] Cannes Lions 2025. 26,900 entries confirmed; 852 Lions awarded (including 34 Grand Prix). Sources: "Cannes Lions confirms 26,900 submissions for 2025"; 2025 Grand Prix winners (Campaign Brief).

[4] D&AD 2025. 11,689 entries from 86 countries; 668 Pencils awarded (3 Black, 3 White, 48 Yellow, 176 Graphite, 434 Wood). Win rate: 5.7%. Sources: D&AD in 2025; Creative Boom coverage.

[5] The One Show 2024. 20,038 entries from 65 countries; 1,790 awards (184 Gold, 206 Silver, 280 Bronze, 1,120 Merits). Win rate: ~8.9%. Source: The One Show 2024 Winners.

[6] System1 Test Your Ad. Database of 120,000+ ads tested; 60% score 1-Star or 2-Star; only 4% achieve 5-Star. Source: System1 Test Your Ad.

[7] WARC ROI benchmarks. Analysis of 1,394 case studies; profit ROI from 1.9:1 to 2.5:1 among successful campaigns. Source: WARC, "From 1.9:1 to 2.5:1".

[8] Marketing Week, 2024 Language of Effectiveness survey (1,200+ respondents). 34.2% of marketers say their company rarely or never measures marketing ROI. Source: Marketing Week, "Over a third of brands don't measure marketing ROI".

[9] Peter Field, "The Crisis in Creative Effectiveness," IPA/Cannes Lions, 2019. 24 years of IPA Databank data. Awarded campaigns' efficiency advantage: 12x (1996–2008), collapsing to 4x (2006–2018). Sources: Marketing Week coverage; full PDF (ThinkTV Canada).

[10] Doshi, A.R. & Hauser, O.P. (2024). "Generative AI enhances individual creativity but reduces the collective diversity of novel content." Science Advances, 10(28), eadn5290. 293 writers, 600 evaluators, 3,519 evaluations. Source: Science Advances (full paper).

[11] Urban, K.K. & Urban, M.K. (2024). "How does the Dunning-Kruger effect happen in creativity? The creative self-concept matters." Thinking Skills and Creativity, 54, 101667. Source: ScienceDirect.

[12] Zhou, E.B., Lee, D. & Gu, B. (2025). "Who expands the human creative frontier with generative AI: Hive minds or masterminds?" Science Advances, 11, eadu5800. 31,076 digital artists, 27 months. Source: Science Advances (full paper).

[13] Bernadette (VCCP). "Daisy, our AI Granny, has won 5 Cannes Lions." Source: Bernadette.